Hello, I am your virtual assistant. How can I help you?

You've read this sentence dozens of times. Conversational agents are everywhere: on websites, in apps, on the other end of the phone. But there's a world of difference between a basic chatbot that goes round in circles and AI that can really solve your problems.

In this article, we demystify chatbots: what they are, how they work, and, most importantly, how to use them effectively in your customer service strategy.

A conversational agent is a computer program capable of communicating with humans in natural language. It can be text-based (chatbot) or voice-based (voicebot, voice assistant).

The goal: to enable users to obtain information or perform tasks through conversation, rather than through a traditional interface (forms, menus, navigation).

Conversational agents have been around since the 1960s (ELIZA, the first chatbot, dates back to 1966), but it was advances in natural language processing (NLP) and, more recently, large language models (LLMs) that made them truly useful.

Rule-based chatbots are the simplest. They operate on a system of keywords and predefined rules: "If the customer says X, respond with Y."

Advantages: easy to implement, predictable behavior, no risk of inappropriate responses.

Limitations: rigid, unable to handle unexpected formulations, frustrating experience when deviating from the script.
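To make the "if the customer says X, respond with Y" idea concrete, here is a minimal sketch of a keyword-based bot. The rules, answers, and the `rule_based_reply` name are invented for illustration and do not come from any real product.

```python
# Hypothetical keyword rules: "if the customer says X, respond with Y".
RULES = [
    (("refund", "money back"), "You can request a refund from your order page."),
    (("track", "where is my order"), "Please share your order number and I will check its status."),
    (("hours", "open"), "Our support team is available Monday to Friday, 9am to 6pm."),
]
FALLBACK = "Sorry, I did not understand. Would you like to talk to a human agent?"


def rule_based_reply(message: str) -> str:
    """Return the answer of the first rule whose keyword appears in the message."""
    text = message.lower()
    for keywords, answer in RULES:
        if any(keyword in text for keyword in keywords):
            return answer
    # No keyword matched: the script has nothing to say about this message.
    return FALLBACK


print(rule_based_reply("I want a refund"))
print(rule_based_reply("My parcel never showed up"))
```

The second message falls through to the fallback even though it is clearly about a delivery, which is exactly the rigidity described above.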

NLP-based chatbots use natural language processing to understand the intent behind the message, even if the wording varies.

Advantages: more flexible, better understanding of context, more natural experience.

Limitations: require training on data, may misinterpret certain requests.

LLM-based agents are the new generation. Built on models such as GPT, they can generate original responses, follow complex contexts, and, to some degree, reason.

Advantages: very natural conversations, ability to handle previously unseen cases, extensive customization.

Limitations: risk of hallucinations (false but plausible answers), need for safeguards, higher costs.

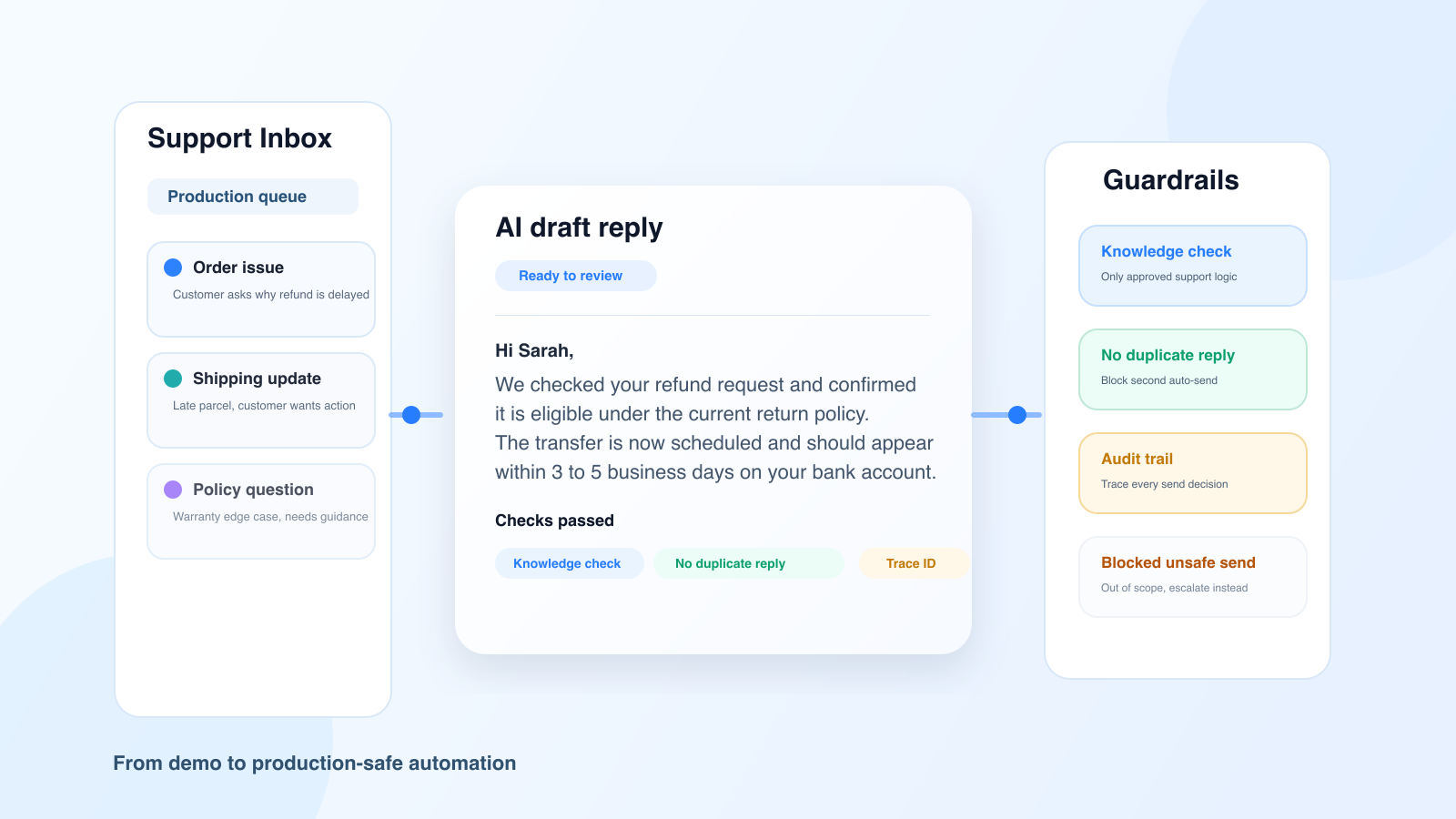

The ultimate evolution: agents capable not only of conversing but also of acting. They can query databases, trigger actions in other systems, and solve problems from start to finish.

This is the approach we are developing at Klark: AI agents that don't just answer questions but actually solve customers' problems.

The agent must first understand what the user is saying. The NLU (Natural Language Understanding) module analyzes the message to extract the user's intent and the details needed to act on it, such as an order number.

Once the intent is understood, the agent must decide what to do: respond directly, ask a clarifying question, transfer to a human, trigger an action, etc.

This dialogue logic can be simple (a decision tree) or complex (an AI model that manages the context of the conversation).
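As an illustration of the simple end of that spectrum, the sketch below hard-codes a small decision tree. The `Understanding` structure, the intent names, and the 0.6 confidence threshold are all assumptions made for this example, not a reference design.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Understanding:
    """Hypothetical output of the NLU step."""
    intent: Optional[str]
    confidence: float
    entities: dict = field(default_factory=dict)


# Illustrative configuration; real values depend on the business.
SENSITIVE_INTENTS = {"complaint"}
REQUIRED_ENTITIES = {"cancel_order": ["order_id"]}
CONFIDENCE_THRESHOLD = 0.6


def decide(u: Understanding) -> str:
    """Pick the next step: respond, clarify, transfer, or act."""
    if u.intent is None or u.confidence < CONFIDENCE_THRESHOLD:
        return "ask_clarification"
    if u.intent in SENSITIVE_INTENTS:
        return "transfer_to_human"
    missing = [e for e in REQUIRED_ENTITIES.get(u.intent, []) if e not in u.entities]
    if missing:
        # The intent is clear but a detail is missing, e.g. the order number.
        return "ask_clarification"
    if u.intent in REQUIRED_ENTITIES:
        return "trigger_action"
    return "respond"


print(decide(Understanding("cancel_order", 0.9, {"order_id": "A1"})))
print(decide(Understanding("complaint", 0.95)))
```

A model-driven dialogue manager would replace these fixed branches with a learned policy, but the set of possible outcomes stays the same.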

The agent formulates its response. It either draws on pre-written responses or generates an original response via an LLM.

The response must be clear, precise, and consistent with the brand's tone.

To be truly useful, the agent must connect to the company's systems: CRM to learn about the customer, database to check an order, API to trigger actions, etc.

This is often where chatbot projects fail: the agent can converse but cannot do anything concrete.
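The difference between conversing and acting can be sketched with a small integration layer. Everything here is hypothetical: `OrderSystem` stands in for whatever API or database the company exposes, and `InMemoryOrders` is a stub so the example runs on its own.

```python
from typing import Optional, Protocol


class OrderSystem(Protocol):
    """Assumed interface to the company's order backend."""
    def get_status(self, order_id: str) -> Optional[str]: ...
    def cancel(self, order_id: str) -> bool: ...


class InMemoryOrders:
    """Stand-in backend used only for this example."""
    def __init__(self, orders: dict) -> None:
        self._orders = dict(orders)

    def get_status(self, order_id: str) -> Optional[str]:
        return self._orders.get(order_id)

    def cancel(self, order_id: str) -> bool:
        # Only orders that have not shipped yet can be cancelled.
        if self._orders.get(order_id) == "processing":
            self._orders[order_id] = "cancelled"
            return True
        return False


def handle_cancellation(orders: OrderSystem, order_id: str) -> str:
    """Check the order, try to cancel it, and report what actually happened."""
    status = orders.get_status(order_id)
    if status is None:
        return f"I could not find order {order_id}."
    if orders.cancel(order_id):
        return f"Order {order_id} has been cancelled."
    return (f"Order {order_id} is already {status} and cannot be cancelled here. "
            "I am transferring you to an agent.")


backend = InMemoryOrders({"A1": "processing", "B2": "shipped"})
print(handle_cancellation(backend, "A1"))
print(handle_cancellation(backend, "B2"))
```

Keeping the backend behind an interface means the same dialogue code can be tested against a stub and later pointed at the real system.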

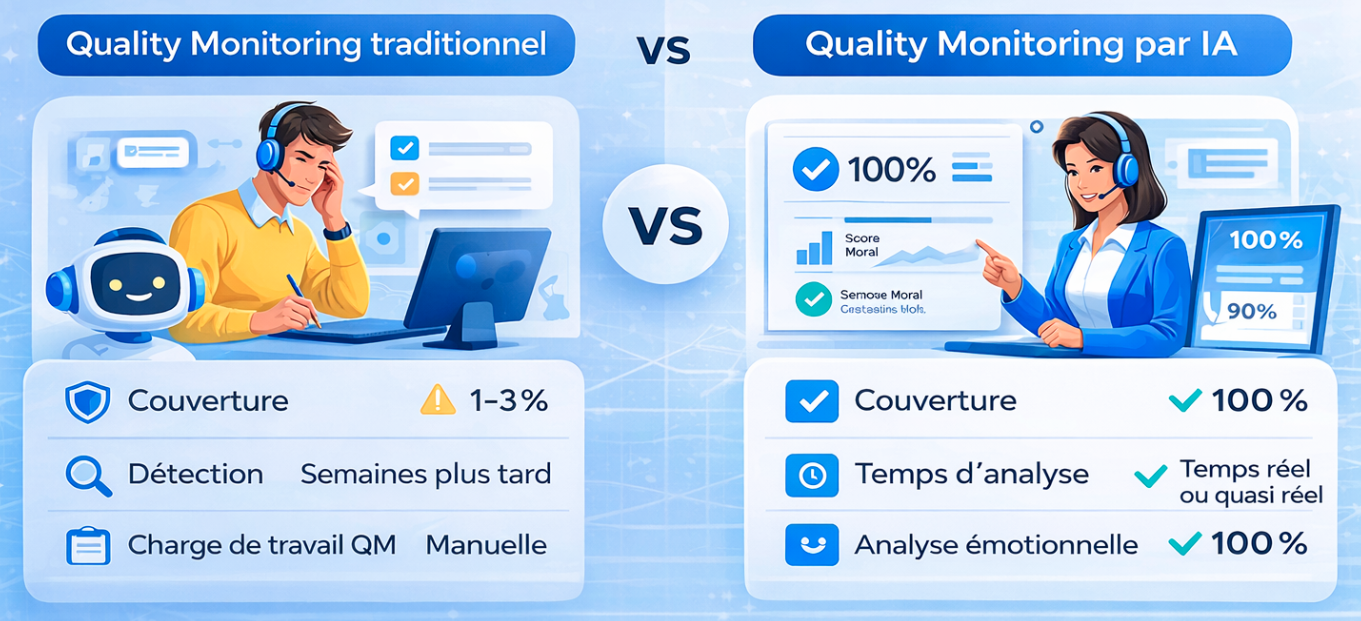

The chatbot allows customers to get answers at any time, without waiting. Frequently asked questions, order tracking, product information... Simple requests are handled instantly.

Before handing over to a human, the conversational agent can qualify the request, collect the necessary information, and route it to the right department. The customer saves time, and the human agent receives a complete file.

The chatbot can also assist human agents by suggesting responses, summarizing customer history, searching the knowledge base, etc. This is known as "co-pilot" mode.

The holy grail: the conversational agent that solves the problem from start to finish. Not just answering the question, but canceling the order, issuing the refund, changing the reservation...

That's what we do at Klark: our AI agents don't just chat, they take action within systems to actually resolve requests.

A poorly designed chatbot does more harm than good. Customers who go round in circles without finding the answer they are looking for end up calling, even more annoyed.

Solution: provide clear exit paths to a human, and do not force interaction with the bot.

An agent that seems almost human, but not quite, can make users uncomfortable. Be transparent: state that it is a bot, and acknowledge its limitations.

LLM-based agents can invent information. This is a serious problem when the answer concerns refund conditions or product availability.

Solution: anchor the agent to sources of truth (knowledge base, documentation), and put safeguards in place.
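One way to picture this anchoring is a lookup that answers only when it finds supporting text, and otherwise returns nothing so the caller can escalate. The sketch below uses naive word overlap and an invented two-entry knowledge base; in an LLM-based setup the retrieved passage would typically be passed to the model as context rather than returned verbatim.

```python
import re
from typing import Optional

# Hypothetical knowledge base entries, invented for this example.
KNOWLEDGE_BASE = {
    "refund policy": "Refunds are available within 30 days of delivery.",
    "delivery times": "Standard delivery takes 3 to 5 business days.",
}
STOPWORDS = {"a", "are", "do", "is", "of", "the", "to", "what", "you", "your"}


def tokens(text: str) -> set:
    """Lowercase word set without a few common stopwords."""
    return set(re.findall(r"[a-z0-9]+", text.lower())) - STOPWORDS


def grounded_answer(question: str, min_overlap: int = 2) -> Optional[str]:
    """Answer from the knowledge base, or return None instead of guessing."""
    question_tokens = tokens(question)
    best_title, best_score = None, 0
    for title, passage in KNOWLEDGE_BASE.items():
        score = len(question_tokens & tokens(f"{title} {passage}"))
        if score > best_score:
            best_title, best_score = title, score
    if best_title is None or best_score < min_overlap:
        return None  # Safeguard: no source found, so hand over rather than invent.
    return f"{KNOWLEDGE_BASE[best_title]} (source: {best_title})"


print(grounded_answer("What is your refund policy?"))
print(grounded_answer("Do you sell gift cards?"))
```

The `min_overlap` threshold is the safeguard: raising it makes the agent answer less often but with better support.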

Automating everything is not desirable. Some situations require empathy and human judgment: sensitive complaints, VIP customers, complex cases.

The chatbot must know when to hand over to a human agent.

What do you want to achieve? Reduce contact volume? Improve availability? Speed up resolution? Vague goals lead to vague projects.

Don't try to automate everything at once. Identify the 5-10 most frequent and simplest requests. Automate them thoroughly before expanding.

A chatbot is only as good as the knowledge you give it. Invest in your knowledge base, FAQs, and documentation.

The agent must be able to easily hand over to a human. And the human must receive the context of the conversation so that the customer does not have to repeat themselves.
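A handover that preserves context can be as simple as packaging what the bot already knows. The field names below (`customer_id`, `collected`, `transcript`) are illustrative assumptions, not the format of any particular helpdesk tool.

```python
from dataclasses import dataclass, field


@dataclass
class Conversation:
    """Hypothetical record of what the bot knows so far."""
    customer_id: str
    messages: list = field(default_factory=list)   # (speaker, text) pairs
    collected: dict = field(default_factory=dict)  # details already gathered


def build_handoff(conversation: Conversation, reason: str) -> dict:
    """Bundle the context a human agent needs to continue without re-asking."""
    return {
        "customer_id": conversation.customer_id,
        "reason": reason,
        "collected": dict(conversation.collected),
        "transcript": [f"{speaker}: {text}" for speaker, text in conversation.messages],
    }


conv = Conversation(
    customer_id="C-42",
    messages=[("customer", "My order arrived damaged."), ("bot", "I am sorry to hear that.")],
    collected={"order_id": "A1"},
)
print(build_handoff(conv, reason="sensitive complaint"))
```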

Track KPIs: automatic resolution rate, escalation rate, post-interaction satisfaction, resolution time. Analyze unsuccessful conversations and make improvements.
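These indicators are simple ratios and averages over conversation logs. The sketch below assumes a hypothetical `ConversationLog` record with one entry per conversation; real tools will expose different fields.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ConversationLog:
    """Hypothetical per-conversation record."""
    resolved_by_bot: bool
    escalated: bool
    resolution_minutes: float
    satisfaction: Optional[int] = None  # survey score from 1 to 5, if answered


def compute_kpis(logs: list) -> dict:
    """Compute the four KPIs over a non-empty list of conversation logs."""
    if not logs:
        raise ValueError("at least one conversation is required")
    total = len(logs)
    scores = [log.satisfaction for log in logs if log.satisfaction is not None]
    return {
        "automatic_resolution_rate": sum(log.resolved_by_bot for log in logs) / total,
        "escalation_rate": sum(log.escalated for log in logs) / total,
        "avg_resolution_minutes": sum(log.resolution_minutes for log in logs) / total,
        # Satisfaction is averaged only over customers who answered the survey.
        "avg_satisfaction": sum(scores) / len(scores) if scores else None,
    }


sample = [
    ConversationLog(True, False, 2.0, 5),
    ConversationLog(True, False, 4.0, 4),
    ConversationLog(False, True, 30.0, 2),
    ConversationLog(False, True, 12.0),
]
print(compute_kpis(sample))
```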

Advances in generative AI are opening up new possibilities:

At Klark, we are working at this frontier: AI agents that don't just converse, but become true customer service collaborators.

Conversational agents are no longer a novelty but an essential component of modern customer service. When deployed effectively, they enhance the customer experience while optimizing costs.

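The keys to success: clear objectives, a narrow initial scope, a solid knowledge base, a smooth handover to humans, and continuous measurement.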

Want to find out what an AI agent can do for your customer service? Request a demo of Klark.